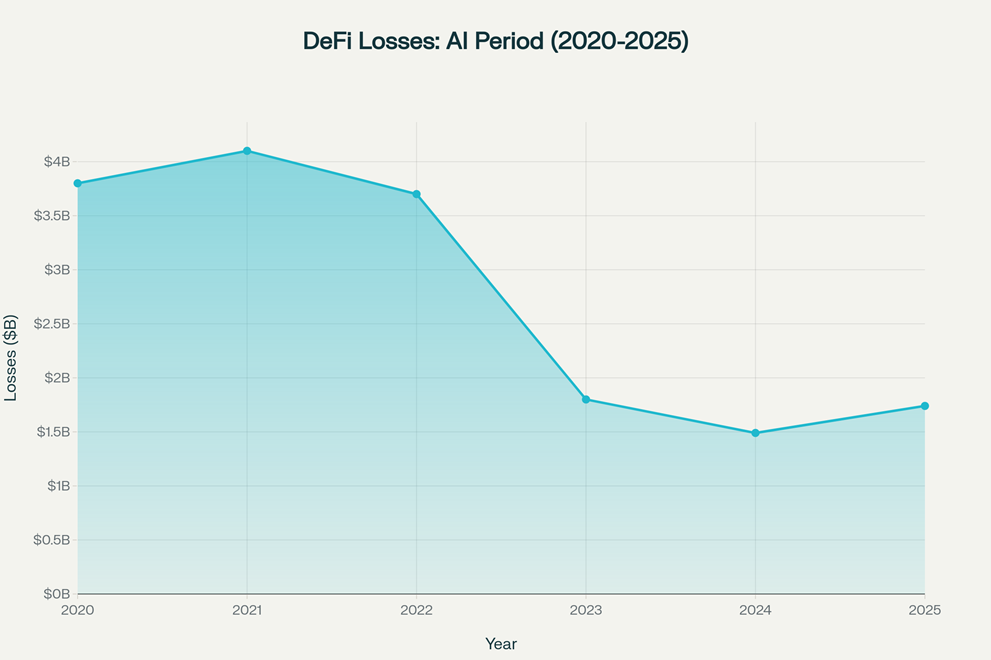

AI-powered smart contract auditing has emerged as one of DeFi’s most promising defenses against an escalating threat landscape. Annual losses peaked at $4.1 billion in 2021 and declined in the following years as artificial intelligence tools matured, yet 2025 already shows concerning signs that the industry’s technological arms race is far from over. The critical question is no longer whether AI can help protect DeFi; it is whether AI’s rapidly advancing capabilities can outpace attackers who are themselves using machine learning to engineer increasingly sophisticated exploits. This analysis explores how AI is reshaping blockchain security, from vulnerability scans that finish in minutes to autonomous monitoring systems that operate around the clock without human fatigue.

The Growing Threat of DeFi Exploits

If you’ve been in DeFi long enough, you’ve seen how bad one exploit can get. The numbers tell a sobering story. In April 2025 alone, the ecosystem suffered $92.45 million in losses across 15 separate incidents, a single month that nearly matches all of Q4 2024’s total damage. Year-to-date through April 2025, total losses have already reached $1.74 billion, roughly a four-fold increase over the same period in 2024, when losses totaled $420 million. This accelerating trend suggests that despite billions invested in security infrastructure, the vulnerability surface in DeFi is expanding faster than our ability to defend it.

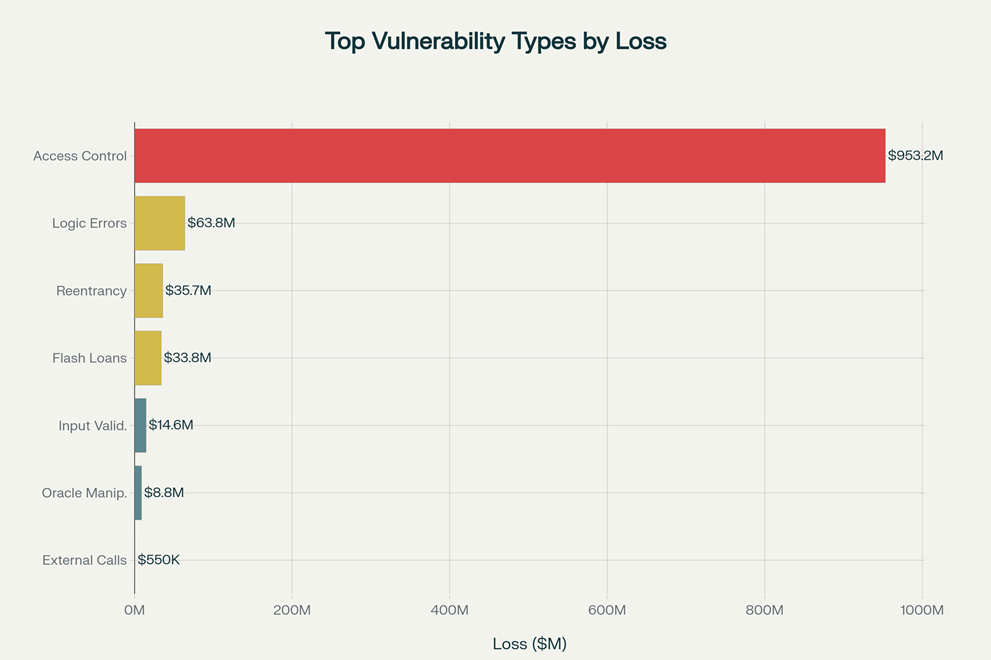

The threat landscape has evolved dramatically. Gone are the days when simple reentrancy attacks dominated. Today’s attackers orchestrate multi-protocol attack chains: borrowing flash loans from Aave, manipulating prices across Uniswap, exploiting logic flaws in target protocols, and repaying everything in a single atomic transaction before any human can notice something went wrong. A single vulnerability, when expertly weaponized, can drain millions in minutes. The Bunni DEX attack in September 2025 drained $8.4 million within hours through a rounding-error exploit that experienced developers had overlooked. Similarly, the Cetus exploit in May 2025 extracted $223 million through a single liquidity calculation bug, showing that even cutting-edge protocols remain vulnerable despite extensive code review.

What makes this worse is that traditional manual audits cannot keep pace. Expert auditors, no matter how brilliant, can review only a finite amount of code. They suffer from fatigue, context-switching costs, and the sheer cognitive burden of tracking state across dozens of interacting contracts. Frankly, the fact that an $8.4 million exploit hit a well-audited protocol built by passionate developers is terrifying. It reveals a fundamental limitation in human-based security: people were never designed to process complexity at blockchain scale. This is where AI enters the picture, not as a replacement for human judgment but as a tireless augmentation that expands what’s possible when you combine silicon and expertise.

What Is AI Smart Contract Auditing?

At its core, AI smart contract auditing uses machine learning, deep learning, and advanced pattern recognition to analyze smart contract code for vulnerabilities, logic flaws, and exploitable edge cases before any manual review begins. Unlike traditional audits that rely on human inspectors methodically stepping through code, AI systems can scan, analyze, and score contracts in minutes instead of weeks, sometimes detecting vulnerability patterns that humans miss.

How does this work in practice? AI models are trained on massive datasets—historical audit reports, known vulnerability signatures, bytecode from real exploits, and blockchain transaction traces showing actual attacks in the wild. When an AI model encounters new code, it recognizes patterns indicative of danger. Is there an external call followed by state mutation? That’s a reentrancy red flag. Are complex mathematical operations happening without overflow checks? The model flags integer arithmetic vulnerabilities. Is there a price oracle being called without considering flash loan manipulation? The system detects economic attack vectors.
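The "external call followed by state mutation" check can be sketched with a simple ordering heuristic. This is only an illustration, not how any named platform works: the regular expressions, the function name `flag_call_before_write`, and the two Solidity fragments are assumptions made up for the example, and a real tool would work on a parsed syntax tree or bytecode rather than raw lines.

```python
import re

# Hypothetical heuristic: an external call that appears before an indexed
# storage write in the same function body is treated as a warning sign.
EXTERNAL_CALL = re.compile(r"\.(call|send|transfer)\s*[({]")
STATE_WRITE = re.compile(r"\w+\[[^\]]+\]\s*(=|\+=|-=)(?!=)")


def flag_call_before_write(function_body: str) -> list[tuple[int, int]]:
    """Return (call_line, write_line) pairs where a write follows an external call."""
    findings = []
    call_line = None
    for number, line in enumerate(function_body.splitlines(), start=1):
        code = line.split("//")[0]  # drop trailing line comments
        if call_line is None and EXTERNAL_CALL.search(code):
            call_line = number
        elif call_line is not None and STATE_WRITE.search(code):
            findings.append((call_line, number))
    return findings


# Illustrative function bodies (assumed, not taken from a real contract).
VULNERABLE = """
(bool ok, ) = msg.sender.call{value: amount}("");
require(ok);
balances[msg.sender] -= amount;
"""

SAFE = """
balances[msg.sender] -= amount;
(bool ok, ) = msg.sender.call{value: amount}("");
require(ok);
"""

print(flag_call_before_write(VULNERABLE))
print(flag_call_before_write(SAFE))
```

The second fragment follows the checks-effects-interactions ordering, so the heuristic stays silent. A trained model generalizes far beyond a hand-written rule like this, but the signal it learns is of the same kind.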

The most sophisticated platforms go much further. AuditAgent by Nethermind, for instance, combines static analysis (reading code without executing it) with dynamic analysis (simulating contract behavior under various conditions) and even employs multi-agent reasoning where specialized LLMs analyze contracts from both auditor and attacker perspectives simultaneously. ChainGPT’s AI Smart Contract Auditor processes code in seconds, generating security scores, optimization suggestions, and even contract summaries for readability—all cross-chain capable. Meanwhile, systems like SolidityScan can evaluate code against 100+ known vulnerability patterns, automatically categorize findings by severity, and suggest remediation steps.

The game-changing aspect: these tools democratize high-quality auditing. Historically, only well-funded protocols could afford top-tier security firms like OpenZeppelin, which charges premium rates. Now, even smaller DeFi projects can run AI-powered pre-audits that catch obvious flaws before paying humans to dig deeper. This layered approach—AI as first-pass screening, then human experts for contextual judgment—is becoming the industry standard because it’s economically sensible and security-effective.

How AI Detects Vulnerabilities Before Hackers Do

The strength of AI vulnerability detection lies in its multifaceted approach. Let’s walk through how it works when a developer uploads code to an AI audit platform.

Step One: Code Ingestion & Pattern Matching

The AI system receives Solidity, Vyper, or Rust code. Immediately, it tokenizes the code and runs it through multiple trained models that have seen thousands of real exploits. These models recognize dangerous patterns—like functions that transfer funds before updating balances, or external calls whose return values aren’t checked. Pattern recognition catches the low-hanging fruit in seconds. This isn’t magic; it’s pattern-matching at superhuman scale and speed. While a human auditor might take hours to scan a 5,000-line contract, AI scans and scores it in 60 seconds.
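As a rough sketch of this first pass, the snippet below flags low-level calls whose return value is discarded. The statement splitting, the `unchecked_calls` helper, and the sample source are all assumptions for illustration; splitting on semicolons would misfire on string literals that contain one, and capturing a return value does not prove it is later checked.

```python
import re

LOW_LEVEL_CALL = re.compile(r"\.(call|send|delegatecall)\b")
GUARD = re.compile(r"\b(require|if|assert)\s*\(")


def unchecked_calls(source: str) -> list[str]:
    """Return statements that make a low-level call and discard its result."""
    flagged = []
    for statement in source.split(";"):
        text = " ".join(statement.split())  # collapse whitespace
        match = LOW_LEVEL_CALL.search(text)
        if not match:
            continue
        prefix = text[: match.start()]
        captured = "=" in prefix  # e.g. (bool ok, ) = target.call(...)
        guarded = GUARD.search(prefix) is not None
        if not (captured or guarded):
            flagged.append(text)
    return flagged


# Illustrative Solidity statements (assumed for the example).
SAMPLE = """
recipient.send(amount);
(bool ok, ) = recipient.call{value: amount}("");
require(ok, "transfer failed");
require(recipient.send(amount));
"""

print(unchecked_calls(SAMPLE))
```

Only the bare `recipient.send(amount)` statement is reported, because nothing captures or tests its boolean result.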

Step Two: Dynamic Simulation & Fuzz Testing

Static analysis only gets you so far. The real power emerges when AI simulates contract execution. Using fuzzing techniques—where thousands of random or strategic inputs are fed to the contract—the AI explores execution paths and identifies behaviors that shouldn’t be possible. Did that input combination cause an integer overflow? Did it expose a state inconsistency? The system catalogs these findings. Machine learning models trained on past exploits can predict which code paths are most likely to be attacked, focusing computational effort where it matters most.
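A minimal sketch of invariant-based fuzzing, assuming a deliberately buggy toy model rather than a real contract: `ToyToken` mimics unchecked 8-bit arithmetic, and the fuzzer feeds it random transfers until the "balances sum to total supply" invariant breaks. The class, the invariant, and the iteration budget are all hypothetical choices for the example.

```python
import random

UINT8_MAX = 255


class ToyToken:
    """Toy token with unchecked 8-bit arithmetic (deliberately buggy)."""

    def __init__(self):
        self.balances = {"alice": 100, "bob": 100}
        self.total_supply = 200

    def transfer(self, sender, recipient, amount):
        # Bug: no balance check, and the subtraction wraps around.
        self.balances[sender] = (self.balances[sender] - amount) & UINT8_MAX
        self.balances[recipient] = (self.balances[recipient] + amount) & UINT8_MAX


def invariant_holds(token):
    """Balances must always add up to the recorded total supply."""
    return sum(token.balances.values()) == token.total_supply


def fuzz(iterations=1000, seed=0):
    """Apply random transfers; return the first input that breaks the invariant."""
    rng = random.Random(seed)
    token = ToyToken()
    for step in range(iterations):
        sender, recipient = rng.sample(["alice", "bob"], 2)
        amount = rng.randint(0, UINT8_MAX)
        token.transfer(sender, recipient, amount)
        if not invariant_holds(token):
            return step, (sender, recipient, amount)
    return None


print(fuzz())
```

Production fuzzers run against compiled bytecode and steer input generation with coverage feedback or learned priors. The part that carries over is the loop of generating inputs, executing, and checking an invariant.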

Step Three: Anomaly Detection & Behavioral Analysis

Advanced AI systems go further and analyze cross-contract interactions. DeFiTail, a framework specifically designed for DeFi attacks, achieved 98.39% accuracy in detecting access control exploits and 97.43% accuracy in flash loan attacks by analyzing how contracts interact with each other across transaction flows. It doesn’t just look at isolated code—it maps the entire attack graph and identifies whether malicious actors could choreograph a profitable exploit through carefully ordered function calls.
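To make "carefully ordered function calls" concrete, here is a brute-force sketch over a toy lending model. It is not DeFiTail’s method, which is a learned model; the state fields, the three actions, and every number are assumptions invented for the example. The search simply enumerates short call sequences and keeps those that leave the attacker with more cash than they started with.

```python
from itertools import product

START = {"cash": 110, "collateral": 0, "price": 1, "borrowed": False}


def deposit(s):
    if s["cash"] < 100:
        return None  # not enough cash, sequence is invalid
    return {**s, "cash": s["cash"] - 100, "collateral": s["collateral"] + 100}


def pump_price(s):
    # Hypothetical manipulation: pay 10 to quadruple the price the protocol reads.
    if s["cash"] < 10:
        return None
    return {**s, "cash": s["cash"] - 10, "price": s["price"] * 4}


def borrow(s):
    # The protocol lends 50% of collateral value at the manipulable price, once.
    if s["borrowed"] or s["collateral"] == 0:
        return None
    loan = s["collateral"] * s["price"] // 2
    return {**s, "cash": s["cash"] + loan, "borrowed": True}


ACTIONS = {"deposit": deposit, "pump_price": pump_price, "borrow": borrow}


def profitable_sequences(max_len=3):
    """Enumerate call orderings and return those that end with a net profit."""
    found = []
    for length in range(1, max_len + 1):
        for names in product(ACTIONS, repeat=length):
            state = START
            for name in names:
                state = ACTIONS[name](state)
                if state is None:
                    break
            if state is not None and state["cash"] > START["cash"]:
                found.append((names, state["cash"] - START["cash"]))
    return found


for names, profit in profitable_sequences():
    print(" -> ".join(names), "profit:", profit)
```

In this toy, depositing and borrowing at the honest price loses money, while either ordering that pumps the price before borrowing is profitable. Exhaustive search does not scale to real protocols, which is why learned models are used to prioritize which interaction paths to examine.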

Step Four: Economic Logic Verification

This is where AI begins to go beyond traditional static analysis. Tools like PromFuzz use large language models with dual-agent prompting strategies, where one AI agent analyzes code from a security auditor’s perspective and another analyzes it from a malicious attacker’s perspective. By synthesizing both views, the system identifies functional bugs: subtle logic flaws where the code does exactly what it says, but the economic assumptions underlying the protocol are violated. A rounding error in an AMM’s price calculation might be mathematically correct but economically exploitable. PromFuzz reportedly achieved an 86.96% recall rate and a 93.02% F1-score in detecting functional bugs, outperforming conventional methods by at least 50% on both metrics.
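A small worked example shows how rounding that looks harmless per call becomes an economic bug. The vault model, the numbers, and the choice to round payouts up are assumptions for illustration only; this is not a reconstruction of any specific exploit.

```python
def ceil_div(a: int, b: int) -> int:
    """Integer division that rounds up."""
    return -(-a // b)


def withdraw(assets: int, shares: int, burn: int):
    """Buggy payout rule: rounds up in the withdrawer's favor."""
    payout = ceil_div(burn * assets, shares)
    return assets - payout, shares - burn, payout


def one_shot(assets=1000, shares=300, attacker_shares=30):
    """Redeem all attacker shares in a single call."""
    _, _, payout = withdraw(assets, shares, attacker_shares)
    return payout


def split(assets=1000, shares=300, attacker_shares=30):
    """Redeem the same shares one at a time, collecting the round-up each time."""
    total = 0
    for _ in range(attacker_shares):
        assets, shares, payout = withdraw(assets, shares, 1)
        total += payout
    return total


print("single withdrawal:", one_shot())  # 100, the fair value of 30 shares
print("thirty withdrawals:", split())    # 120, 20 more than fair value
```

Each individual call is off by less than one unit, which is why a line-by-line review can wave it through. The loss only appears when the calls are composed, and that is the kind of economic reasoning the dual-agent approach is aiming at. The usual mitigation is to round against the caller.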

Real-World AI Audit Tools & Case Studies

The theoretical is already becoming practical. Several leading platforms are actively preventing exploits in real DeFi deployments.

CertiK’s Real-Time Monitoring

CertiK’s Skynet platform provides real-time transaction monitoring across blockchain networks, immediately flagging suspicious contract interactions that match known attack patterns. When transactions flow through the blockchain, Skynet analyzes them against a database of exploit signatures and behavioral baselines. If a transaction deviates significantly, such as a contract suddenly calling obscure functions or moving funds to unexpected addresses, an alert fires. This isn’t retroactive forensics; it is detection while the attack is still unfolding.
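The idea of a behavioral baseline can be sketched with a plain z-score test. This is not a description of Skynet’s internals, which are not given here; the function name, the three-standard-deviation threshold, and the sample transfer sizes are assumptions chosen for the example.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], value: float, threshold: float = 3.0) -> bool:
    """Flag a value more than `threshold` standard deviations from the baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical recent transfer sizes for one contract.
baseline = [100, 110, 90, 105, 95]

print(is_anomalous(baseline, 102))   # ordinary transfer
print(is_anomalous(baseline, 5000))  # sudden large outflow
```

Real monitors track many features at once (callers, function selectors, destination addresses) and combine them with signature matching, but each feature is judged against history in the same way.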

Nethermind’s AuditAgent

AuditAgent has scanned over 80,700 lines of code, identifying 9,549 vulnerabilities with a 92% implementation rate for suggested fixes. Developers report that the tool saves them 4-6 hours per 1,000 lines of code compared to manual review. For rapidly moving DeFi teams, this isn’t a marginal gain; it’s transformational. The tool is far from infallible on its own, however: in basic mode it detects easy vulnerabilities with 45% sensitivity and hard bugs with 18% sensitivity, with the reported figure for hard bugs rising to 79% when combined with human expert verification.

OpenZeppelin’s Defender + AI Integration

OpenZeppelin, which has audited over 1 million lines of code and uncovered 700+ critical vulnerabilities, is increasingly layering AI analysis into their Defender monitoring platform. Their proprietary Code Inspector tool alone detects over 60% of low-severity issues automatically, allowing human security researchers to focus effort on nuanced, context-dependent risks. This human-machine collaboration has proven highly effective for high-stakes protocols.

Real-World Prevention: The Curve Finance Lesson

One telling case involves the Curve Finance exploit of July 2023, where attackers drained $69 million by exploiting a reentrancy bug in the Vyper compiler itself, not in Curve’s code directly. Because the flaw sat in the compiler, source-level review of the contracts alone was unlikely to catch it. Now that the vulnerability is documented, a properly tuned AI auditing system can flag contracts built with the affected compiler versions and alert developers to upgrade. Post-mortem analysis by CertiK revealed that 78.6% of reentrancy losses in 2023 traced back to this single compiler vulnerability, a pattern that automated tooling can now readily detect.
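Checking for a known-bad compiler is one of the simpler rules to automate. The sketch below assumes the affected releases were Vyper 0.2.15, 0.2.16, and 0.3.0, as publicly reported after the incident, and reads the version from the source pragma. The regex and helper name are illustrative, and a pragma with a range only shows the declared constraint, so a real check should also inspect the compiler version recorded at deployment.

```python
import re

# Vyper releases publicly reported as affected by the 2023 reentrancy-lock bug.
AFFECTED_VYPER = {"0.2.15", "0.2.16", "0.3.0"}

# Matches both "# @version 0.2.15" and the newer "#pragma version 0.3.10" style.
VERSION_PRAGMA = re.compile(
    r"#\s*(?:@version|pragma\s+version)\s+[\^~>=<]*\s*(\d+\.\d+\.\d+)"
)


def uses_affected_compiler(source: str) -> bool:
    """Return True if the declared Vyper version is on the affected list."""
    match = VERSION_PRAGMA.search(source)
    return bool(match) and match.group(1) in AFFECTED_VYPER


print(uses_affected_compiler("# @version 0.2.15\n@nonreentrant('lock')"))
print(uses_affected_compiler("#pragma version 0.3.10"))
```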

Challenges and Limitations of AI in Security

For all its promise, AI-powered auditing faces genuine constraints that practitioners need to understand. If you rely entirely on AI without human judgment, it’s the blind leading the blind.

The False Positive Problem

AI systems can flag code as vulnerable when it’s actually safe (a false positive), or miss exploits by classifying them as benign (a false negative). Getting this tuning right is difficult. If auditing tools generate excessive false positives, developers dismiss their warnings as noise: the “cry wolf” trap. If they are tuned too permissively and miss real bugs, they create false confidence. Both failure modes are real in commercial AI security tools.
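The tuning trade-off is easiest to see by moving a score threshold over a set of labeled findings. The scores and labels below are invented toy data, not measurements from any tool; the point is only how precision and recall move in opposite directions.

```python
def precision_recall(scored, threshold):
    """scored: list of (model_score, is_real_bug). Returns (precision, recall)."""
    flagged = [real for score, real in scored if score >= threshold]
    true_pos = sum(flagged)
    total_real = sum(real for _, real in scored)
    precision = true_pos / len(flagged) if flagged else 1.0
    recall = true_pos / total_real if total_real else 1.0
    return precision, recall


# Invented toy data: (confidence score, whether the finding was a real bug).
FINDINGS = [
    (0.95, True), (0.80, True), (0.70, False), (0.60, True),
    (0.40, False), (0.30, False), (0.20, True), (0.10, False),
]

for threshold in (0.9, 0.5, 0.15):
    p, r = precision_recall(FINDINGS, threshold)
    print(f"threshold={threshold:.2f} precision={p:.2f} recall={r:.2f}")
```

A strict threshold reports only sure things and misses three of the four real bugs. A loose one catches every bug but buries it among false alarms. Neither extreme is useful on its own, which is why the flagged output still needs human triage.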

The Black-Box Interpretability Crisis

One of the thorniest challenges: AI models often can’t explain why they flagged something as vulnerable. Machine learning models work through pattern recognition in multidimensional spaces that humans can’t easily visualize. A neural network might identify a vulnerability with 95% confidence but be unable to articulate the causal reasoning to auditors or regulators. This creates accountability gaps. Investors distrust unexplained risk scores. Regulators demand transparency. Developers can’t fix what they don’t understand.

Training Data Bias

AI models trained on existing exploit datasets may overfit to historical attack patterns while missing novel exploits that don’t resemble past incidents. When attackers deliberately develop new attack vectors to evade detection systems, traditional models trained on historical data become less effective. This is the perpetual arms race: AI systems learn from yesterday’s attacks while tomorrow’s attackers are already engineering ways around yesterday’s defenses.

Economic Logic Remains Partially Opaque

Even advanced AI struggles with deeply contextualized business logic vulnerabilities. A contract might be technically correct Solidity but economically unsound, vulnerable to subtle liquidation cascades, governance attacks, or MEV exploits that require understanding not just code but the entire DeFi composability graph. AI is improving here rapidly, but today’s models remain narrow pattern-matchers rather than general economic reasoners.

The Training-Data Ceiling

AI can’t be better than its training data. If all models are trained on published exploits and known vulnerability types, they may consistently miss novel attack vectors that don’t fit existing categories. The field of AI security is fundamentally reactive—we train on what has happened, not what could happen. This temporal lag means determined attackers with sufficient resources may always be several steps ahead.

The Future – Autonomous AI Security Agents for DeFi

The next frontier isn’t smarter audits. It’s autonomous AI security agents that operate as permanent, tireless guardians of DeFi protocols.

Imagine this scenario in 2026: Your DeFi protocol deploys an autonomous AI agent that continuously monitors every transaction, every price feed update, every governance action. The agent doesn’t just flag anomalies—it dynamically adjusts protocol parameters in real-time to defend against detected attacks. When it identifies a flash loan pattern combined with oracle manipulation, the agent automatically adjusts risk thresholds, pauses vulnerable functions, or triggers insurance mechanisms before losses cascade.
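A rule-based sketch of the "detect, then pause" loop described in this scenario. Every name here is hypothetical (`TxSignal`, `Guard`, the 10% tolerance), and the sketch assumes a trusted reference price such as a time-weighted average is available and greater than zero. A deployed agent would act through an on-chain pause role with governance safeguards, not a Python flag.

```python
from dataclasses import dataclass


@dataclass
class TxSignal:
    uses_flash_loan: bool
    oracle_price: float
    reference_price: float  # assumed trusted baseline, e.g. a time-weighted average


@dataclass
class Guard:
    max_deviation: float = 0.10  # hypothetical 10% tolerance
    paused: bool = False

    def observe(self, tx: TxSignal) -> str:
        """Return the action the agent takes for this transaction."""
        deviation = abs(tx.oracle_price - tx.reference_price) / tx.reference_price
        if tx.uses_flash_loan and deviation > self.max_deviation:
            self.paused = True  # flash loan plus price distortion: stop the protocol
            return "pause"
        if deviation > self.max_deviation:
            return "alert"  # suspicious price alone: escalate to a human
        return "allow"


guard = Guard()
print(guard.observe(TxSignal(uses_flash_loan=True, oracle_price=101, reference_price=100)))
print(guard.observe(TxSignal(uses_flash_loan=False, oracle_price=150, reference_price=100)))
print(guard.observe(TxSignal(uses_flash_loan=True, oracle_price=150, reference_price=100)))
```

Separating "alert" from "pause" is a deliberate choice in this sketch: an agent that halts the protocol on every anomaly becomes a denial-of-service vector in its own right.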

This isn’t science fiction; it’s already being explored, and early autonomous security agents are moving from concept toward deployment. Such agents would be trained to recognize emerging exploit patterns, simulate attack scenarios continuously, and recommend preventive contract upgrades before vulnerabilities are exploited. Integration with decentralized insurance platforms could create a self-healing ecosystem where detected risks automatically trigger coverage mechanisms and remediation procedures.

The Artificial Superintelligence Alliance (ASI) combining Fetch.ai, SingularityNET, and Ocean Protocol represents this vision—creating decentralized AI infrastructure capable of learning across the entire DeFi landscape in real-time. Individual protocols would run specialized agents, but these agents would communicate and share threat intelligence, creating emergent collective security that’s greater than any single protocol’s defensive capability.

The competitive advantage will increasingly accrue to protocols that deploy such systems. Consider how this transforms protocol economics: lower security costs, faster innovation cycles, reduced insurance premiums, and genuine competitive moat through better attack detection than competitors. By 2027, expecting a major DeFi protocol to operate without autonomous AI monitoring will be like expecting a modern bank to operate without intrusion detection systems.

The Human Element: Auditors as AI Orchestrators

Here’s what people sometimes miss when they get dazzled by AI capabilities: the best security in 2025-2026 combines AI as tools with human expertise as strategy.

Leading security firms like OpenZeppelin aren’t replacing auditors—they’re multiplying their effectiveness. A human expert who previously spent 40 hours manually reading code now spends 4 hours understanding what the AI flagged, digging deeper into edge cases, and providing architectural guidance the AI can’t deliver. This is leverage. This is 10x productivity.

The auditor’s job is shifting. Rather than exhaustively reviewing code, auditors are becoming:

- AI Result Validators – Verifying that AI findings represent genuine vulnerabilities versus false alarms, requiring deep technical knowledge and judgment.

- Risk Strategists – Understanding the broader economic and composability risks that individual contracts face, threats no single AI system can model comprehensively.

- Red Team Designers – Collaborating with AI agents to design attack scenarios that stress-test assumptions, something that requires creative adversarial thinking.

This human-machine interface is precisely where the best security emerges. Frankly, the fact that AI can now audit faster than humans is both impressive and scary. But the fact that humans are still needed to interpret, validate, and strategize around AI findings is reassuring—it means the security landscape isn’t getting handed entirely to black-box algorithms.

Final Thoughts

The story of AI and DeFi security isn’t one of replacement—it’s one of exponential leverage. When we combine AI’s pattern recognition, speed, and tireless operation with human judgment, contextual understanding, and strategic thinking, something powerful emerges: DeFi may finally achieve the security posture necessary to support institutional capital at scale.

The data supports cautious optimism. Losses declined from $4.1 billion annually (2021) to $1.49 billion (2024), a period in which AI adoption accelerated. While 2025 shows a reversal, security researchers widely attribute this to increasingly sophisticated exploits deliberately designed to evade current detection systems, exactly what we’d expect in an arms race between defense and attack. The question isn’t whether AI will prevent all DeFi hacks; it won’t. The question is whether AI, paired with better architectural practices and insurance mechanisms, can reduce total losses enough that DeFi becomes economically viable for risk-averse institutional capital.

Maybe the smartest move in DeFi isn’t chasing yield; it’s trusting code that AI and human auditors have verified together. As this technology matures, the protocols that invest early in autonomous AI security infrastructure will gain competitive advantages that could reshape the entire landscape. The future of DeFi security isn’t human auditors vs. AI. It’s human auditors and AI agents working in concert, each compensating for the other’s blind spots, creating something neither could achieve alone.

For more in-depth AI and blockchain security analysis, explore additional insights at aicryptobrief.com.
